
Forecasting and Improvement Through Deeper Kanban Metric Analysis

06 May 2026

A team relying on a digital Kanban board with automatic progress tracking quickly learns the definitions of throughput, lead time, and cycle time. Team members see them tracked by the system, and consider what the numbers may be suggesting. However, raw values usually only scratch the surface of the matter; the real value emerges when flow metrics are analyzed over time: when trends, anomalies, and correlations become subjects of interrogation, not only reporting. That’s the point at which they evolve from numbers to signals.

Beyond counting completed items

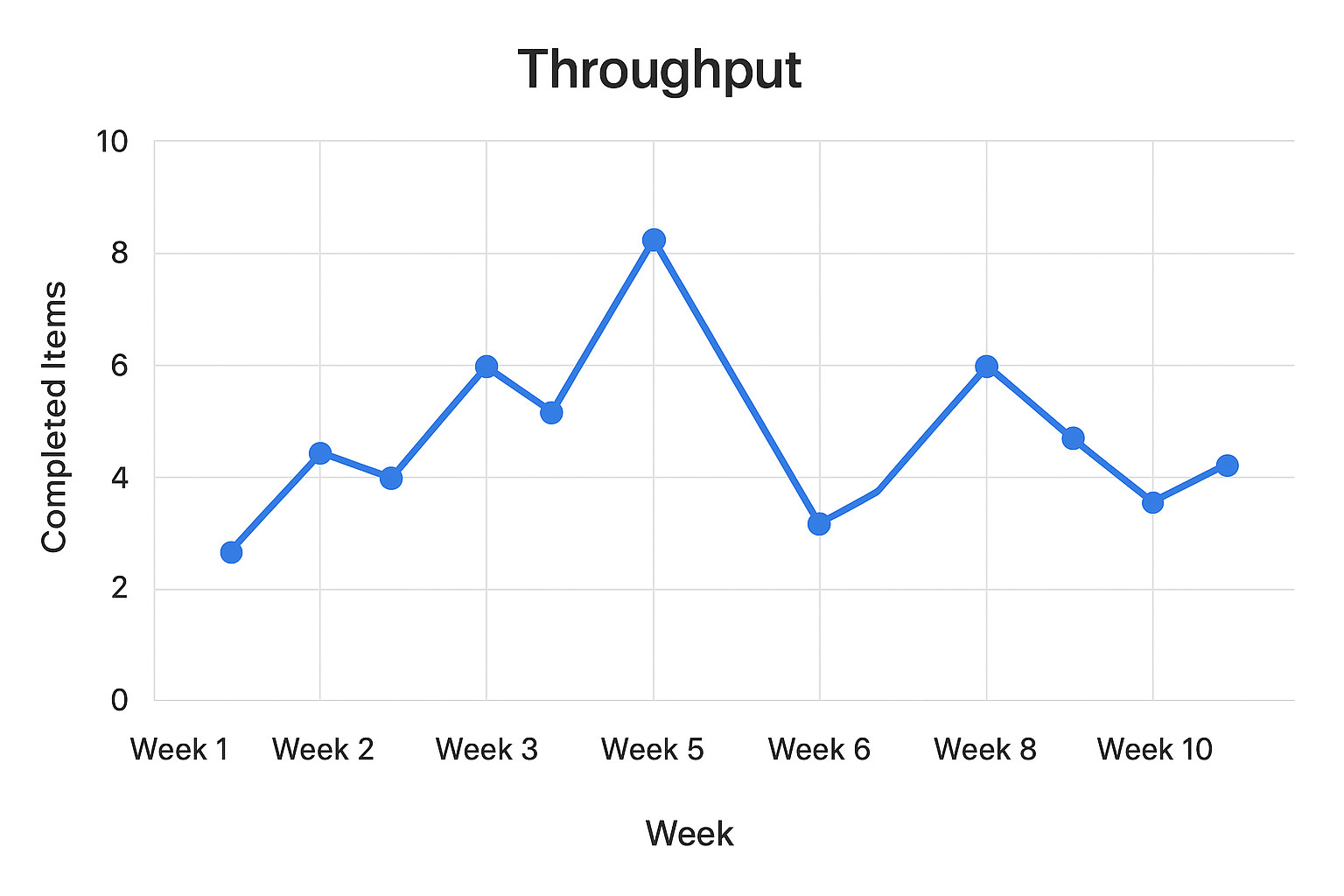

On the surface, throughput may appear to be little more than a tally of how many items a team delivers in a set time. Treating it that way is limiting, because a single number hides volatility. Plotting throughput on a run chart reveals much more: a sharp spike might point to a batch release, while a sudden dip could reflect a systemic bottleneck.

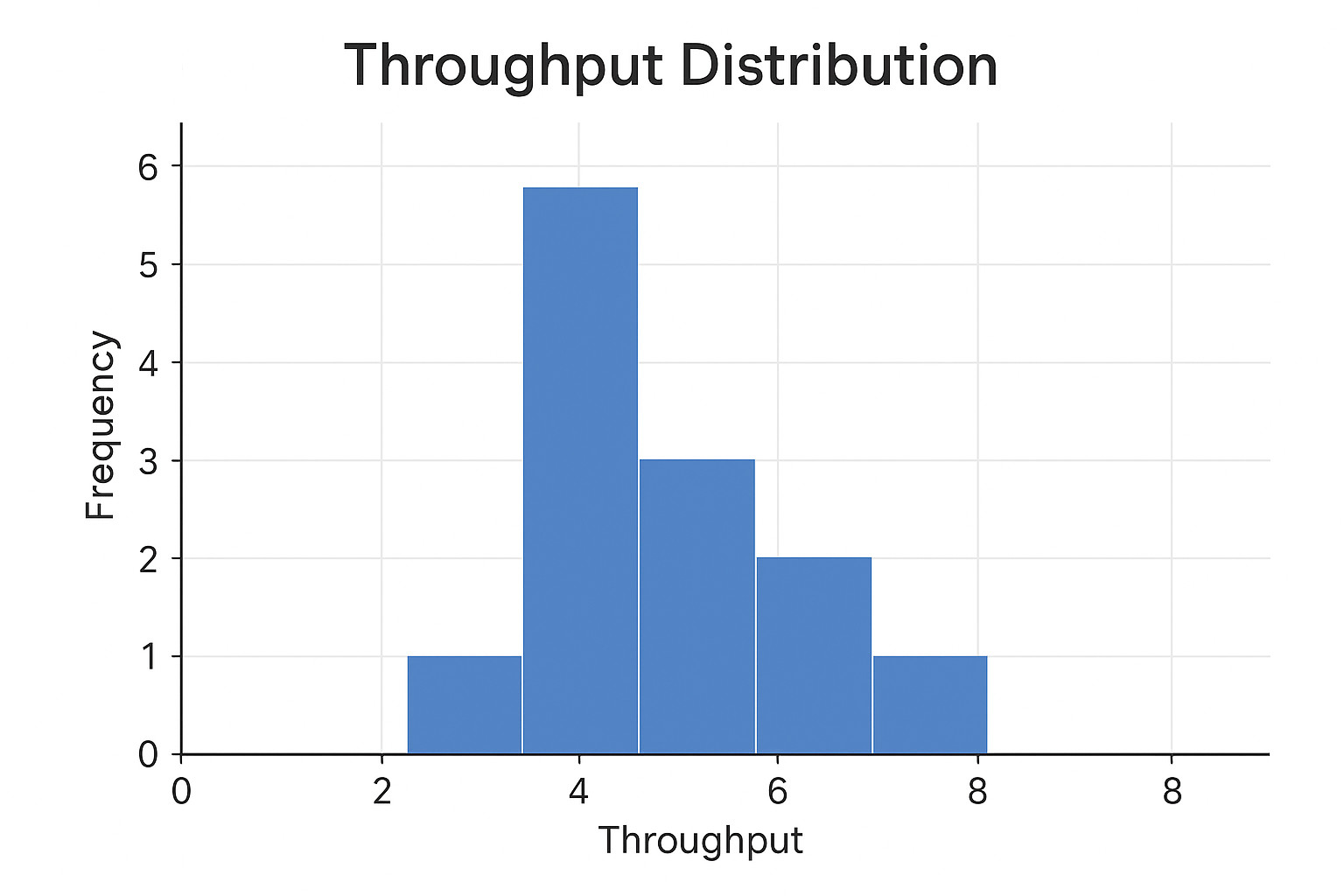

Examining the distribution is more revealing still. Is throughput consistently clustered around a median, or does it oscillate? Stable throughput indicates predictable delivery capacity and supports reliable capacity planning. Erratic throughput suggests hidden dependencies, uneven demand, or workflow friction, and analyzing it is key to diagnosing systemic issues.
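As a rough illustration, the difference between clustered and oscillating throughput can be expressed with a few summary statistics. The sketch below is only one possible approach: the function name `throughput_summary`, the sample data, and the choice of the coefficient of variation as a stability measure are all assumptions made for this example, not features of any particular Kanban tool.

```python
from statistics import mean, median, pstdev

def throughput_summary(counts):
    """Summarize per-period throughput counts (for example, items per week)."""
    avg = mean(counts)
    spread = pstdev(counts)
    return {
        "median": median(counts),
        "mean": avg,
        "min": min(counts),
        "max": max(counts),
        # Coefficient of variation: spread relative to the mean.
        # Lower values mean throughput is clustered more tightly.
        "cv": spread / avg if avg else float("inf"),
    }

# Hypothetical weekly throughput for two teams with similar averages.
stable = [8, 9, 8, 10, 9, 8, 9, 9]
erratic = [2, 15, 4, 18, 1, 12, 3, 17]

stable_stats = throughput_summary(stable)
erratic_stats = throughput_summary(erratic)

print(f"stable:  median={stable_stats['median']}, cv={stable_stats['cv']:.2f}")
print(f"erratic: median={erratic_stats['median']}, cv={erratic_stats['cv']:.2f}")
```

Both sample series average roughly nine items per week, yet only the first one would be a safe basis for a delivery commitment.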

Detecting patterns in flow

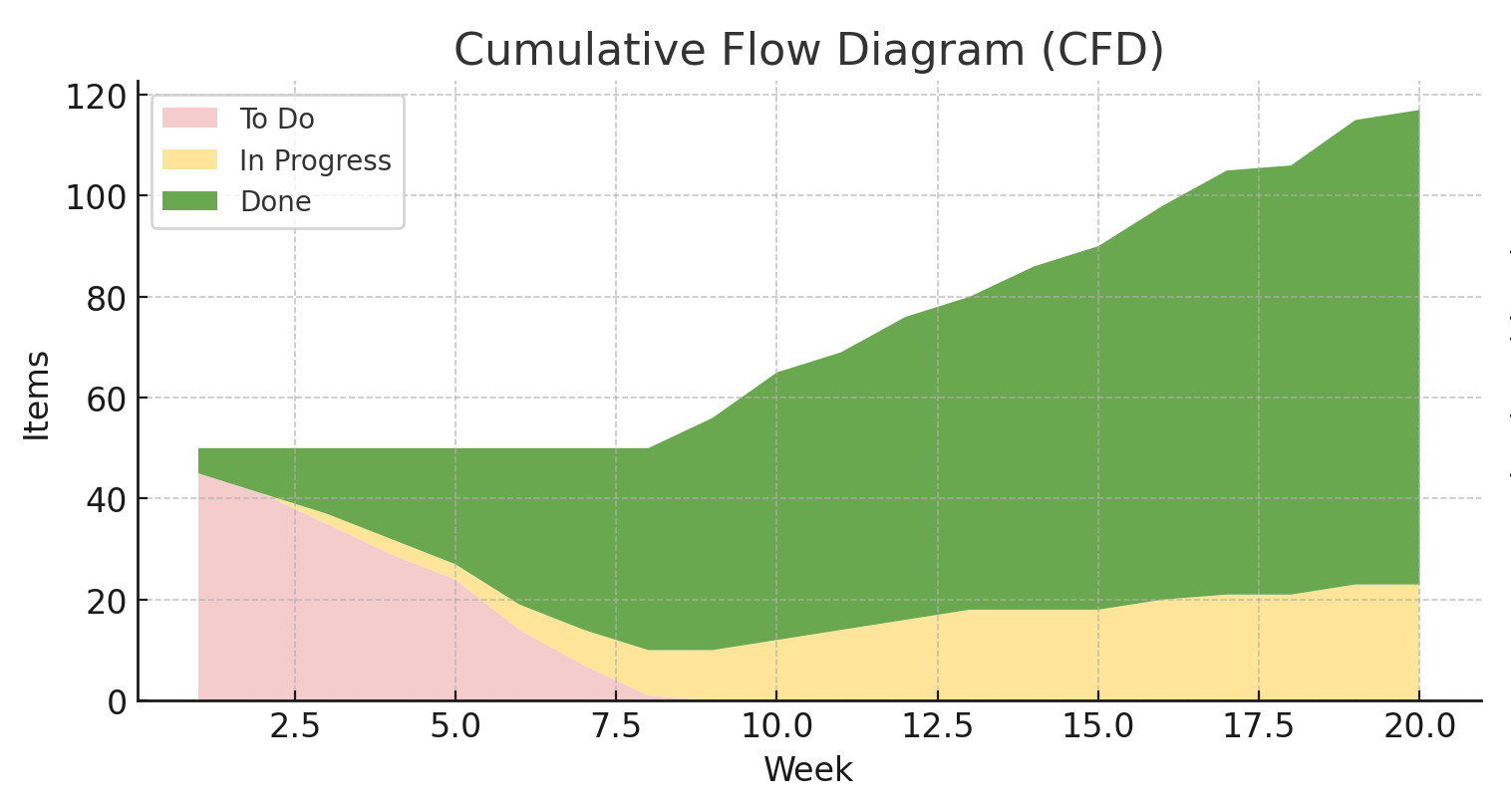

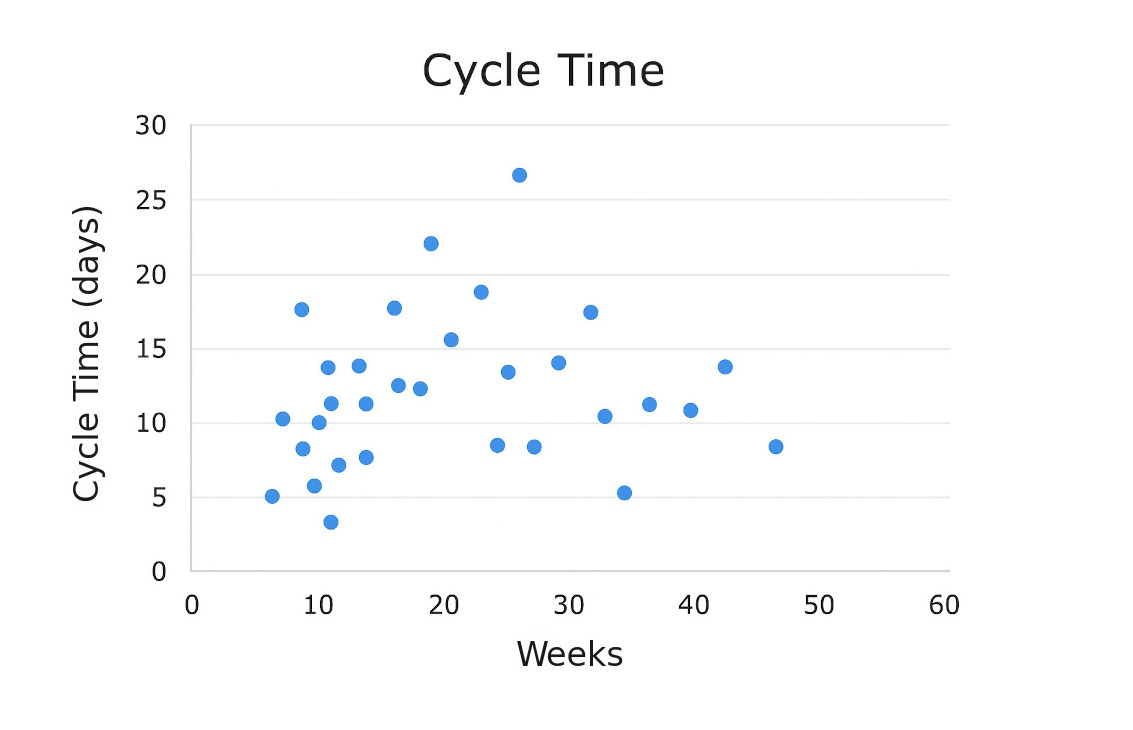

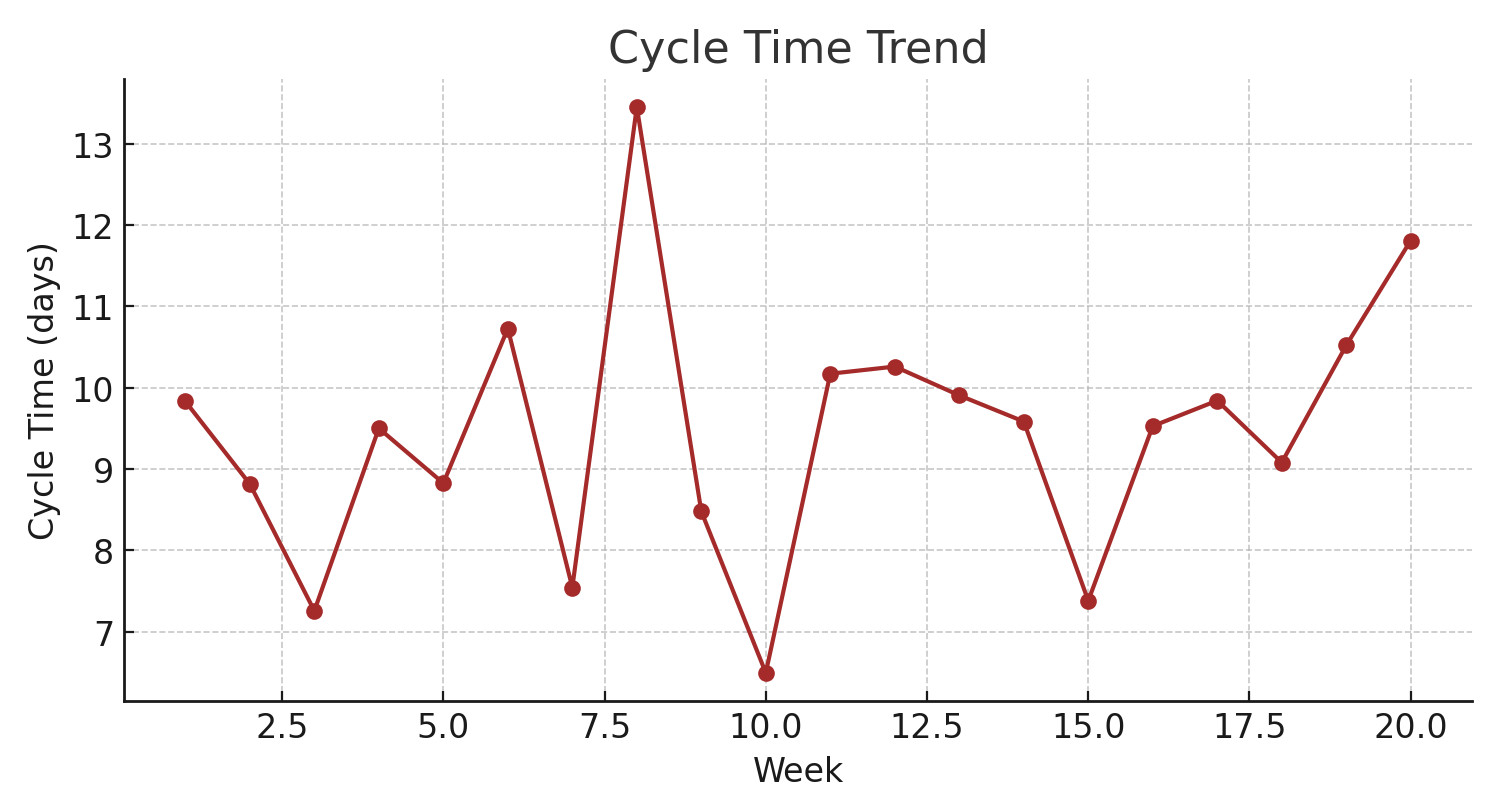

Lead time (request to delivery) and cycle time (actual work start to delivery) typically receive attention only as single averages, which can be deceptive. An average compresses outliers into one neat number and conceals how work actually moves. A cycle time scatter plot shows whether items are reliably completed within a certain band or whether there is a long tail of delayed work, while a cumulative flow diagram (CFD) shows where work is accumulating.
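To see how an average can mislead, compare it with percentiles of the same data. The following is a minimal sketch with made-up cycle times; the helper `percentile` uses the nearest-rank method, which is one of several common percentile definitions and was chosen here only for simplicity.

```python
import math
from statistics import mean

def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of items at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

# Hypothetical cycle times in days for ten completed items.
cycle_times_days = [2, 3, 3, 4, 4, 5, 5, 6, 7, 21]

average = mean(cycle_times_days)       # 6
p50 = percentile(cycle_times_days, 50) # 4
p85 = percentile(cycle_times_days, 85) # 7
p95 = percentile(cycle_times_days, 95) # 21

print(f"average={average}, p50={p50}, p85={p85}, p95={p95}")
```

In this sample the average of 6 days is longer than what most items needed, while telling nothing about the one item that took 21 days. A statement such as "85% of items finish within 7 days" describes the band more honestly.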

Tracking these metrics as time series adds another layer of insight. For example, an upward trend in average cycle time may not trigger alarms in a weekly snapshot, but over several months it can indicate that work-in-progress policies are slipping, queues are growing, or upstream quality is deteriorating. Detecting such drift early allows teams to intervene before delivery predictability collapses.
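One simple way to make slow drift visible is to fit a straight line through the series and look at its slope. The sketch below assumes a hypothetical list of weekly average cycle times and uses an ordinary least-squares slope; a rolling median or a control chart would be equally valid choices.

```python
from statistics import mean

def trend_slope(series):
    """Least-squares slope of a series against its index (units per period)."""
    n = len(series)
    if n < 2:
        raise ValueError("need at least two data points")
    x_bar = (n - 1) / 2
    y_bar = mean(series)
    numerator = sum((i - x_bar) * (y - y_bar) for i, y in enumerate(series))
    denominator = sum((i - x_bar) ** 2 for i in range(n))
    return numerator / denominator

# Hypothetical weekly average cycle times in days over twelve weeks.
weekly_cycle_time = [4.0, 4.2, 3.9, 4.3, 4.4, 4.2, 4.6, 4.5, 4.8, 4.7, 5.0, 5.1]

slope = trend_slope(weekly_cycle_time)
print(f"cycle time is changing by {slope:+.2f} days per week")
```

No single week in this sample looks alarming next to its neighbor, but a positive slope sustained over a quarter adds up to about a full extra day per item.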

Where different metrics meet

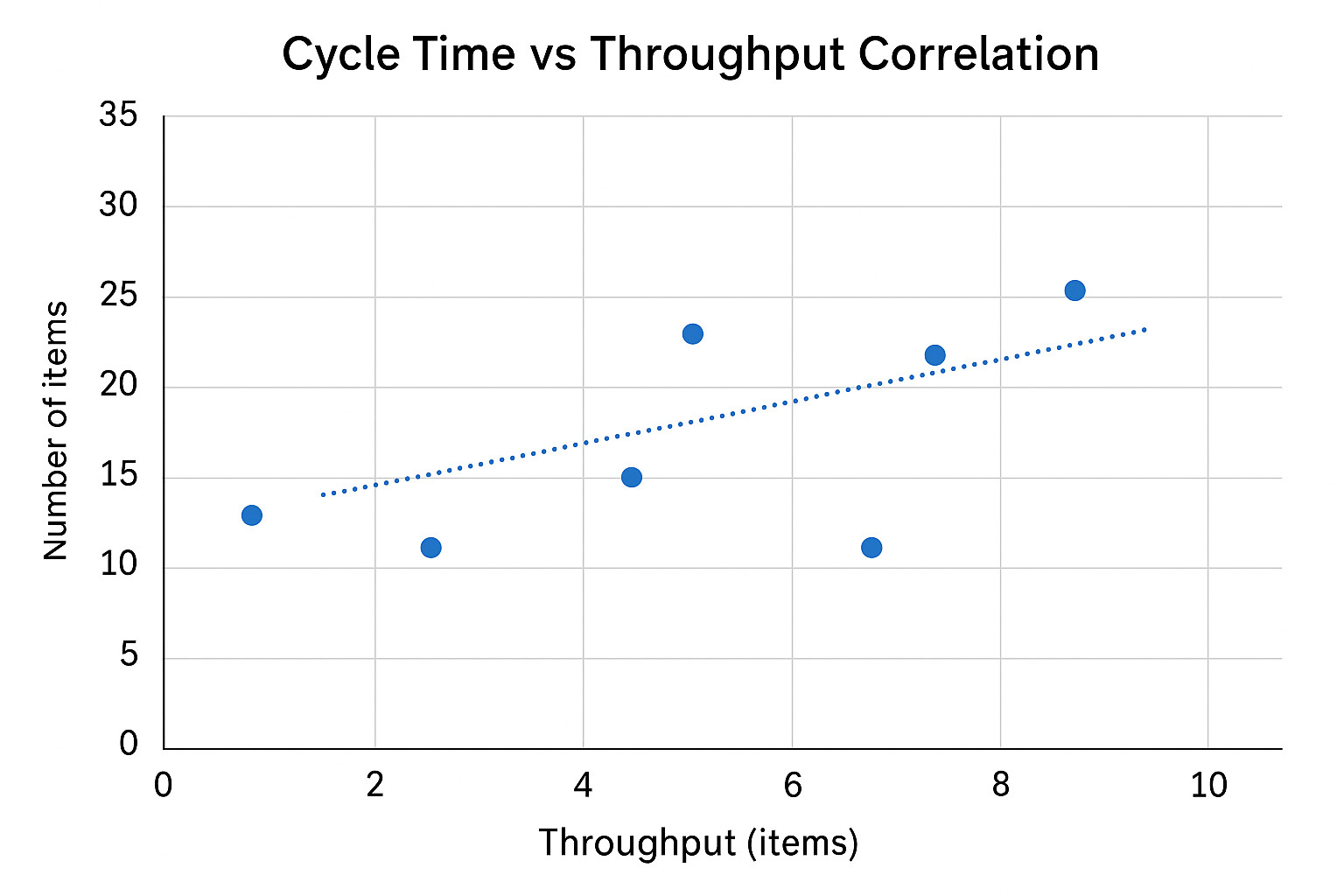

The deepest learning about your team's process begins when metrics are compared with one another; throughput and cycle time, for instance, are not independent. A sustained increase in cycle time without a corresponding drop in throughput may mean that the team is keeping up its output, but only by carrying more work in progress and pushing items through with higher effort or burnout risk. Conversely, a drop in throughput paired with rising cycle time could suggest that systemic bottlenecks are adding up.

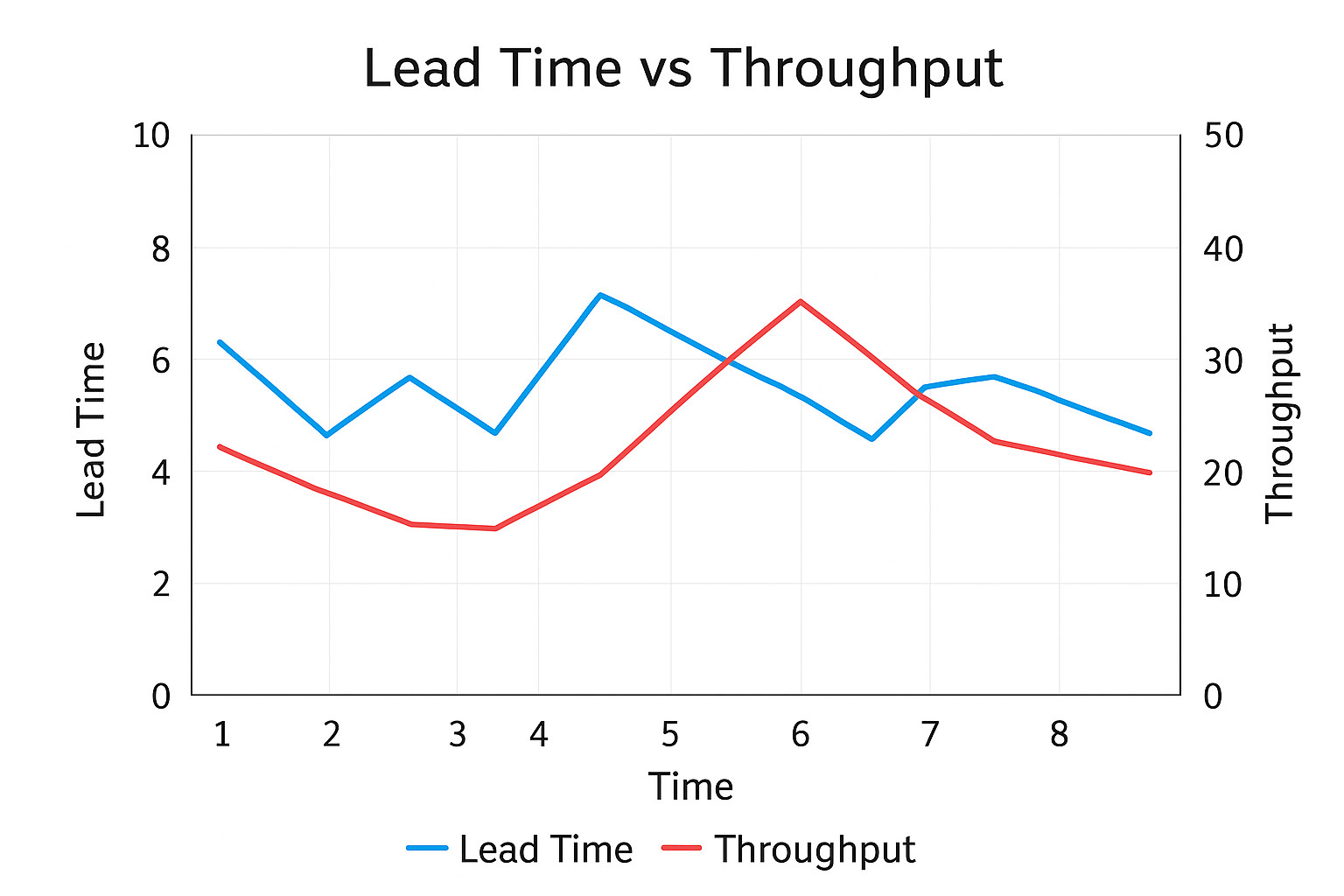

Lead time and throughput correlations can highlight demand–capacity mismatches; if incoming requests consistently outpace throughput, lead times inevitably grow. Recognizing such a pattern may prompt the team to start a conversation with stakeholders about rebalancing demand, setting more realistic expectations, or securing additional capacity.
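The arithmetic behind this mismatch is easy to sketch. In the example below, the function names and the weekly figures are hypothetical, and the lead time estimate relies on Little's Law (items in the system divided by average completion rate), which is only an approximation when the system is not stable.

```python
def backlog_projection(arrivals, completions, starting_backlog=0):
    """Track the number of open items period by period."""
    backlog = starting_backlog
    history = []
    for arrived, done in zip(arrivals, completions):
        # The backlog cannot drop below zero.
        backlog = max(0, backlog + arrived - done)
        history.append(backlog)
    return history

def approx_lead_time(open_items, avg_throughput):
    """Little's Law estimate of lead time, in periods."""
    return open_items / avg_throughput

# Hypothetical data: 10 requests arrive per week, 8 items are delivered.
arrivals = [10, 10, 10, 10, 10, 10]
completions = [8, 8, 8, 8, 8, 8]

backlog = backlog_projection(arrivals, completions)
print(backlog)  # [2, 4, 6, 8, 10, 12]

for week, open_items in enumerate(backlog, start=1):
    estimate = approx_lead_time(open_items, avg_throughput=8)
    print(f"week {week}: {open_items} open items, about {estimate:.2f} weeks of lead time")
```

A gap of just two items per week looks harmless in any single week, yet the estimated lead time keeps climbing for as long as the gap persists.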

Spotting anomalies and outliers

Not all anomalies are bad news. A sudden surge in throughput may reflect successful automation, and a sharp dip in cycle time could be the result of smaller, better-scoped work items. What matters is asking why anomalies occur rather than smoothing them away as noise. Outlier analysis often exposes systemic issues: a single task that sat in progress for three weeks could signal an upstream dependency that has been silently constraining delivery for months.
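Outliers can be flagged for a follow-up conversation with a few lines of code. This sketch uses the common 1.5 × IQR rule on hypothetical item IDs and cycle times; the threshold is a convention rather than a law, and a team may prefer a percentile-based cutoff instead.

```python
from statistics import quantiles

def flag_outliers(items, factor=1.5):
    """Return (item_id, days) pairs whose cycle time lies outside the IQR fences."""
    days = [d for _, d in items]
    q1, _, q3 = quantiles(days, n=4)
    iqr = q3 - q1
    low, high = q1 - factor * iqr, q3 + factor * iqr
    return [(item_id, d) for item_id, d in items if d < low or d > high]

# Hypothetical completed items with their cycle times in days.
completed = [
    ("T-1", 2), ("T-2", 3), ("T-3", 3), ("T-4", 4), ("T-5", 4),
    ("T-6", 5), ("T-7", 5), ("T-8", 6), ("T-9", 7), ("T-10", 21),
]

flagged = flag_outliers(completed)
print(flagged)  # [('T-10', 21)]
```

The output is a list of items worth asking "why?" about, not a list of items to exclude from the data.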

Continuous improvement through flow insights

Treating process metrics as living signals shifts their role from a performance scoreboard to a feedback mechanism. A team that studies the shape of its throughput distribution, the drift of cycle time, or the correlation between demand and capacity gains a richer understanding of how work flows through the system. That, in turn, enables targeted experiments, such as adjusting WIP limits or rebalancing staffing levels.

In the long run, these insights also support capacity planning. Instead of promising delivery on the basis of intuition or a single average, teams can rely on throughput distributions and cycle time bands to forecast with much higher confidence. This increases both predictability and credibility with stakeholders.
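A common way to turn a throughput distribution into a forecast is a Monte Carlo simulation: repeatedly resample past throughput until a backlog is finished, then read off percentiles of the results. The sketch below is a simplified illustration with hypothetical names and data. It assumes that past weeks are representative of future ones and that the backlog does not grow during delivery.

```python
import math
import random

def forecast_periods(throughput_history, backlog_size, trials=10_000, seed=None):
    """Simulate how many periods it takes to complete backlog_size items."""
    if not any(t > 0 for t in throughput_history):
        raise ValueError("history needs at least one period with completed items")
    rng = random.Random(seed)
    outcomes = []
    for _ in range(trials):
        remaining, periods = backlog_size, 0
        while remaining > 0:
            # Draw one past period at random and replay its throughput.
            remaining -= rng.choice(throughput_history)
            periods += 1
        outcomes.append(periods)
    outcomes.sort()
    return outcomes

def confidence_level(sorted_outcomes, pct):
    """Number of periods within which pct% of the simulated runs finished."""
    rank = max(1, math.ceil(pct * len(sorted_outcomes) / 100))
    return sorted_outcomes[rank - 1]

# Hypothetical weekly throughput history and a backlog of 60 items.
history = [6, 9, 7, 4, 10, 8, 5, 9]
runs = forecast_periods(history, backlog_size=60, seed=42)

print(f"50% of runs finished within {confidence_level(runs, 50)} weeks")
print(f"85% of runs finished within {confidence_level(runs, 85)} weeks")
```

Quoting the 85% figure instead of the 50% one is a deliberate trade of optimism for reliability, which is usually the better basis for a commitment to stakeholders.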

The deeper discipline of flow

Metrics aren't answers, but questions in numeric form. When teams learn to treat them as signals in conversation with each other - and as evolving trends rather than static snapshots - they develop a sharper sense of their system’s health. The payoff is not just more charts to gather; it's a more disciplined ability to see, predict, and improve the flow of work.
